The sparsity-preserving version is not a row-relaxation scheme that uses one row at a time. Moreover, unlike our method, it does not store or update the primal vector \(x_k\). This constitutes a major difference between the two schemes. We prefer to deal with (1.1) rather than (7.8) because (1.1) has a simple geometric interpretation.

Earlier reports on the performance of Mangasarian's SOR method are very encouraging and enthusiastic, and none of these papers mentions any complication or difficulty. Our experiments reveal a different picture: it is shown that the rate of convergence at the final stage can be arbitrarily slow.

The determinant \(\det (X^T X)^{-1}\) is a scalar measure of the generalized variance of the least squares estimate of \(\beta\). A small determinant implies a small volume of the confidence ellipsoid and a high precision of the coefficients. For a given number of observations \(I\), the design matrix with the smallest \(\det (X^T X)^{-1}\) (or, equivalently, the largest \(\det (X^T X)\)) is said to be D-optimal; \(\sigma^2\) is left out when these measures are compared because it is assumed to be constant. Hence, a D-optimal design minimizes the volume of the confidence region.

A similar criterion, also related to the shape of the ellipsoid, is \(\operatorname{tr} (X^T X)^{-1}\), which is related to the average variance of the coefficients. A matrix \(X\) that minimizes \(\operatorname{tr} (X^T X)^{-1}\) is said to be A-optimal. For a given volume of the ellipsoid (given by the determinant), the minimum trace is achieved when all the elements \(c_{kk}\) are equal, which corresponds to a spherical confidence region (i.e., the estimates of the coefficients are uncorrelated).

Finally, the maximal eigenvalue of \((X^T X)^{-1}\) corresponds to the longest principal axis of the confidence ellipsoid and indicates how large the variances of the coefficients are overall. A matrix \(X\) that minimizes the maximal eigenvalue of \((X^T X)^{-1}\) is said to be E-optimal. A more detailed description of the geometry of the confidence ellipsoid and its relationship with these optimality criteria can be found in Papakyriazis 113 (p. 356) or Rawlings et al.
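The three criteria can be compared numerically on a small case. The sketch below is only an illustration, not taken from the cited works: it assumes a straight-line model with two coefficients (intercept and slope), and the two designs `design_a` and `design_b`, as well as all function names, are hypothetical. It uses the closed-form inverse and eigenvalues of a 2×2 symmetric matrix so that only the standard library is needed.

```python
import math


def xtx(x_values):
    """Return X^T X for the model y = b0 + b1*x, where X has rows (1, x)."""
    n = len(x_values)
    sx = sum(x_values)
    sxx = sum(x * x for x in x_values)
    return ((n, sx), (sx, sxx))


def inverse_2x2(m):
    """Invert a 2x2 matrix given as ((a, b), (c, d))."""
    (a, b), (c, d) = m
    det = a * d - b * c
    if det == 0:
        raise ValueError("X^T X is singular; the design cannot estimate both coefficients")
    return ((d / det, -b / det), (-c / det, a / det))


def criteria(x_values):
    """Return det, trace and largest eigenvalue of (X^T X)^-1 for a design."""
    (c11, c12), (_, c22) = inverse_2x2(xtx(x_values))
    det = c11 * c22 - c12 * c12
    tr = c11 + c22
    # Eigenvalues of a symmetric 2x2 matrix: tr/2 +/- sqrt((tr/2)^2 - det).
    lam_max = tr / 2 + math.sqrt(max((tr / 2) ** 2 - det, 0.0))
    return {"D": det, "A": tr, "E": lam_max}


# Hypothetical designs with four observations each.
design_a = [-1.0, -1.0, 1.0, 1.0]   # points pushed to the ends of the range
design_b = [-1.0, -0.5, 0.5, 1.0]   # points spread over the range

for name, design in (("a", design_a), ("b", design_b)):
    print(name, criteria(design))
```

Under these assumptions, `design_a` gives the smaller value for all three measures, so it would be preferred by the D-, A- and E-criteria alike. In general the three criteria need not agree.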

This is translated into the confidence ellipsoid as follows. First, we must note that \(X^T X\) and \((X^T X)^{-1}\) have the same eigenvectors, and that their eigenvalues are related as \(\lambda'_a = \lambda_a^{-1}\) (with \(\lambda'_a\) the eigenvalues of \(X^T X\) and \(\lambda_a\) those of \((X^T X)^{-1}\)). Hence, the axes of the ellipsoid are oriented in the direction of the eigenvectors of \((X^T X)^{-1}\). Next, the half-length of each axis is \(d (\lambda'_a)^{-1/2} = d \lambda_a^{1/2}\), which means that a large \((X^T X)^{-1}\) is translated into large eigenvalues \(\lambda_a\) and, in turn, into a large ellipsoid. Moreover, large correlations among the columns of \(X\) (collinearity) are translated into large differences between the largest and the smallest eigenvalues of \(X^T X\) and, hence, into very different ellipsoid axis lengths (a long, narrow ellipsoid). Finally, the volume of the ellipsoid is proportional to the product of the axis half-lengths, that is, to the square root of the product of the eigenvalues of \((X^T X)^{-1}\), which is \(\sqrt{\det (X^T X)^{-1}}\).
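The relationships between eigenvalues, axis half-lengths and volume can be checked on a two-coefficient case. This is a minimal sketch under stated assumptions: the matrix `xtx_example` and the value `d = 1.0` are hypothetical, chosen to mimic correlated columns, and the function names are illustrative only. In two dimensions the "volume" is the area \(\pi d^2 \sqrt{\det (X^T X)^{-1}}\).

```python
import math


def eigenvalues_sym_2x2(m):
    """Eigenvalues (ascending) of a symmetric 2x2 matrix ((a, b), (b, c))."""
    (a, b), (_, c) = m
    mean = (a + c) / 2
    radius = math.hypot((a - c) / 2, b)
    return (mean - radius, mean + radius)


def inverse_2x2(m):
    """Invert a 2x2 matrix given as ((a, b), (c, d))."""
    (a, b), (c, d) = m
    det = a * d - b * c
    if det == 0:
        raise ValueError("matrix is singular")
    return ((d / det, -b / det), (-c / det, a / det))


def ellipse_half_lengths(xtx, d):
    """Half-lengths d * sqrt(lambda_a), lambda_a being eigenvalues of (X^T X)^-1."""
    return tuple(d * math.sqrt(lam) for lam in eigenvalues_sym_2x2(inverse_2x2(xtx)))


# Hypothetical X^T X with a sizeable off-diagonal term (correlated columns).
xtx_example = ((4.0, 3.0), (3.0, 4.0))
d = 1.0

lam_prime = eigenvalues_sym_2x2(xtx_example)            # eigenvalues of X^T X
lam = eigenvalues_sym_2x2(inverse_2x2(xtx_example))     # eigenvalues of (X^T X)^-1
half = ellipse_half_lengths(xtx_example, d)
area = math.pi * half[0] * half[1]

print("eigenvalues of X^T X:     ", lam_prime)
print("eigenvalues of the inverse:", lam)
print("axis half-lengths:        ", half)
print("area of the ellipse:      ", area)
```

With these numbers the two eigenvalues of \(X^T X\) differ by a factor of seven, so one axis of the ellipse is about 2.6 times as long as the other, which is the long, narrow shape associated with collinearity.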

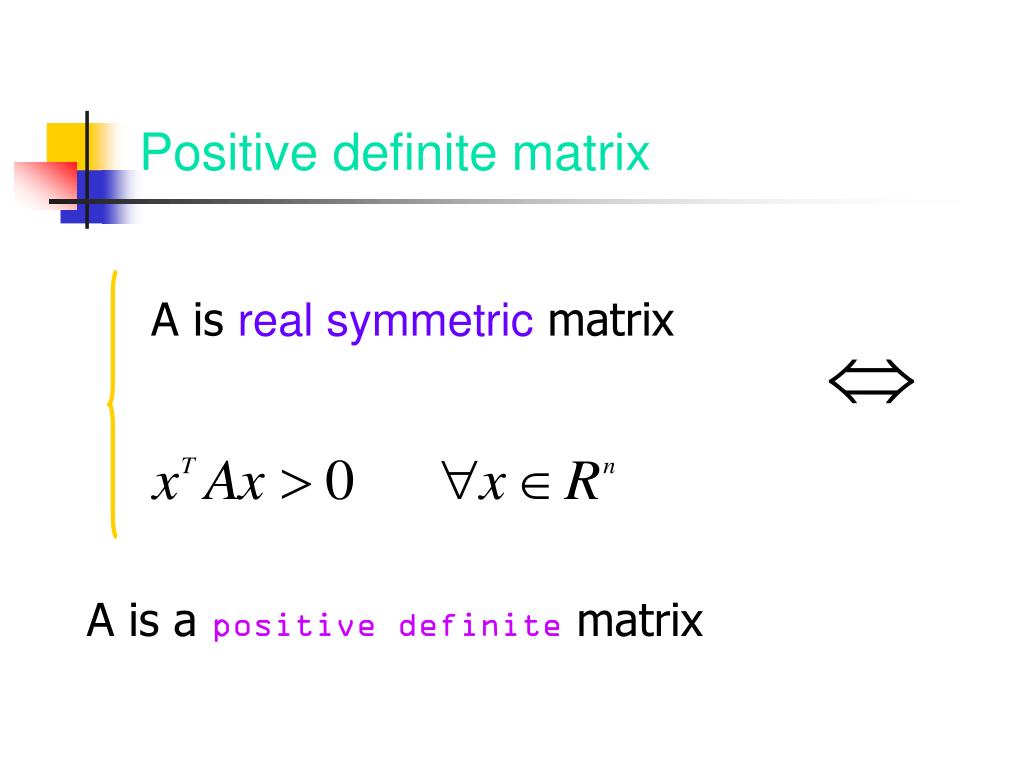

An \(n \times n\) symmetric matrix \(M\) is called positive definite (written as \(M > 0\)) if for all \(x \in \mathbb{R}^n\), \(x \neq 0\), we have \(x^T M x > 0\), and positive semidefinite (written as \(M \ge 0\)) if for all \(x \in \mathbb{R}^n\), \(x \neq 0\), we have \(x^T M x \ge 0\). \(X\) is called the Schur complement of \(A\) in \(C\).

\[ (\beta - b)^T X^T X (\beta - b) = K s^2 F_{K,(I-K),1-\alpha} \tag{49} \]

Making \(A = X^T X\) and \(d^2 = K s^2 F_{K,(I-K),1-\alpha}\), it is seen that Equation (49) defines an ellipsoid whose shape and volume depend on the design matrix \(X\). The importance of this matrix is better seen in terms of the dispersion matrix \((X^T X)^{-1}\), which is used for calculating the variances and covariances of the coefficients. Large values in \((X^T X)^{-1}\) imply large variances and covariances.
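To make the ellipsoid concrete, the quadratic form on the left-hand side of the ellipsoid equation can be evaluated for candidate coefficient vectors and compared with \(d^2\). The sketch below assumes two coefficients; the matrix `xtx`, the estimate `b` and the value `d2` are hypothetical numbers chosen for illustration (in practice \(d^2\) would come from \(K\), \(s^2\) and a table or routine for the F distribution, which is not computed here).

```python
def quadratic_form(beta, b, xtx):
    """Return (beta - b)^T (X^T X) (beta - b) for two coefficients."""
    u0 = beta[0] - b[0]
    u1 = beta[1] - b[1]
    (a11, a12), (a21, a22) = xtx
    return a11 * u0 * u0 + (a12 + a21) * u0 * u1 + a22 * u1 * u1


def in_confidence_region(beta, b, xtx, d2):
    """True if beta lies on or inside the ellipsoid with squared 'radius' d2."""
    return quadratic_form(beta, b, xtx) <= d2


# Hypothetical values: X^T X, least squares estimate b, and d^2.
xtx = ((4.0, 0.0), (0.0, 2.5))
b = (1.0, 2.0)
d2 = 1.0  # assumed; stands in for K * s^2 * F quantile

for beta in ((1.3, 2.2), (1.6, 2.0)):
    print(beta, quadratic_form(beta, b, xtx), in_confidence_region(beta, b, xtx, d2))
```

Here the first candidate falls inside the region and the second outside. A larger entry in \(X^T X\) penalizes deviations along that coefficient more strongly, which is the same as saying the ellipsoid is shorter in that direction.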
